Salesforce Data Cloud Architecture: A Complete Guide for Developers

Salesforce Data Cloud offers a robust architecture for unifying customer data in real time, empowering developers to build scalable CRM applications. This guide summarises key architectural components, developer workflows, and best practices for implementation.

Salesforce Data Cloud Architecture Overview

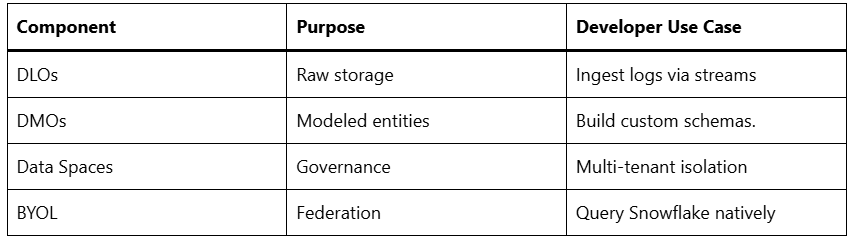

Salesforce Data Cloud uses a zero-copy lakehouse model that blends data lakes and warehouses, providing petabyte-scale storage without duplicating data. It integrates natively with Salesforce clouds such as Sales Cloud and Service Cloud. Data is stored in Apache Parquet files, with Amazon S3 holding cold data and DynamoDB supporting real-time processing. Data can flow in both directions through zero-copy integration and Bring Your Own Lake (BYOL) federation, which lets you query external warehouses such as Snowflake or Amazon Redshift as if they were part of the system.

Key pillars include data ingestion, harmonisation, identity resolution, activation, and AI-driven intelligence. Unlike traditional ETL pipelines, it processes data in real time using metadata-driven schemas, enabling Customer 360 views across silos.

Data Ingestion Layer

Ingestion starts with connectors for Salesforce apps, external lakes, web and mobile SDKs, and third-party sources such as Amazon S3 or Google BigQuery. Developers configure data streams in a visual UI to pull raw data into Data Lake Objects (DLOs), which store unstructured or semi-structured data in Parquet format.

Zero-ETL connectors remove most custom pipeline work and scale to very large data volumes. Access control is enforced through Data Spaces, which are logical partitions for managing data and permissions by brand, region, or department. For developers, the Data Cloud Ingestion API supports programmatic loading in both streaming and bulk modes, and ingested data can be queried with SQL through the Query API.
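To make the streaming pattern concrete, here is a minimal sketch that builds an Ingestion API request without sending it. It assumes the streaming endpoint has the shape `/api/v1/ingest/sources/{source}/{object}` on your Data Cloud tenant host and accepts a JSON body of the form `{"data": [...]}`. It also assumes you already hold a Data Cloud access token. The tenant host, connector name (`web_events`), object name (`page_view`), and the helper `build_ingest_request` are all hypothetical. Check the paths against the Ingestion API reference for your org.

```python
import json
import urllib.request


def build_ingest_request(tenant_endpoint: str, access_token: str,
                         source_api_name: str, object_name: str,
                         records: list) -> urllib.request.Request:
    """Build, but do not send, a streaming Ingestion API request.

    ASSUMPTION: the streaming endpoint has the shape
    https://{tenant}/api/v1/ingest/sources/{source}/{object}
    and accepts a JSON body of the form {"data": [...records...]}.
    """
    url = (f"https://{tenant_endpoint}/api/v1/ingest/sources/"
           f"{source_api_name}/{object_name}")
    body = json.dumps({"data": records}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical tenant, connector, and object names for illustration only.
request = build_ingest_request(
    tenant_endpoint="mytenant.c360a.salesforce.com",
    access_token="DATA_CLOUD_ACCESS_TOKEN",
    source_api_name="web_events",
    object_name="page_view",
    records=[{"event_id": "e-1", "email": "ada@example.com"}],
)
# Sending it would be: urllib.request.urlopen(request)
```

Building the request separately from sending it keeps the payload logic testable offline. Retries, batching, and token refresh would be layered on top in a real integration.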

Data Harmonisation and Modelling

Raw DLOs are mapped to the Customer 360 Data Model, a pre-built schema of standard subject areas and objects (e.g., Party, Individual, Engagement) and the relationships between them. Developers use the standard Data Model Objects (DMOs) or create custom ones, and build batch or streaming data transforms, largely through low-code tooling, for operations such as normalisation or enrichment.

Data mapping links DLO fields to DMO fields, ensuring semantic consistency for downstream apps. External Data Lake Objects (EDLOs) federate off-platform data via BYOL, querying it in place as if it were local, without moving it. This layer uses a unified metadata store for querying across hybrid sources.
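Conceptually, DLO-to-DMO mapping is a field rename combined with a normalisation step. The sketch below shows that idea on a single record. It is not how Data Cloud implements mapping, which you configure in the UI. The source field names, target field names, and normalisation rules are all hypothetical.

```python
# Hypothetical mapping from raw DLO field names to DMO field names.
FIELD_MAP = {
    "email_addr": "Email",
    "first": "FirstName",
    "last": "LastName",
    "phone_num": "Phone",
}


def normalise_phone(value: str) -> str:
    """Keep digits only (a deliberately simplistic normalisation)."""
    return "".join(ch for ch in value if ch.isdigit())


# Per-target-field normalisers; fields without one pass through unchanged.
NORMALISERS = {
    "Email": lambda v: v.strip().lower(),
    "Phone": normalise_phone,
    "FirstName": str.strip,
    "LastName": str.strip,
}


def map_dlo_to_dmo(dlo_record: dict) -> dict:
    """Rename mapped fields, normalise their values, and drop unmapped fields."""
    dmo_record = {}
    for src_field, dmo_field in FIELD_MAP.items():
        value = dlo_record.get(src_field)
        if value is None:
            continue  # missing source values are simply not mapped
        normalise = NORMALISERS.get(dmo_field, lambda v: v)
        dmo_record[dmo_field] = normalise(value)
    return dmo_record
```

For example, a raw record with a padded, mixed-case email and a formatted phone number comes out with a lower-cased email and a digits-only phone. Any fields absent from `FIELD_MAP` are dropped.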

Identity Resolution Engine

At its heart is an identity resolution engine that combines deterministic (exact) and fuzzy matching to stitch profiles from disparate identifiers (email, phone, and CRM IDs) into a unified 360-degree view. Developers configure match and reconciliation rules in a no-code UI or programmatically, and the engine handles merges and real-time updates.
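The deterministic half of this idea can be shown with a union-find structure: any two records that share an exact identifier value end up in the same unified profile, transitively. This toy is only an illustration of exact-match stitching, not Data Cloud's engine. Fuzzy matching and reconciliation rules, which decide which field values win in the unified profile, are out of scope. The record shape and the `resolve_identities` helper are hypothetical.

```python
def resolve_identities(records: list, match_keys=("email", "phone")) -> list:
    """Group record IDs into clusters that share any exact match-key value."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x: str) -> str:
        # Walk to the cluster root, halving the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    first_seen = {}  # (key, value) -> ID of the first record carrying it
    for r in records:
        for key in match_keys:
            value = r.get(key)
            if not value:
                continue  # empty identifiers never cause a match
            if (key, value) in first_seen:
                union(first_seen[(key, value)], r["id"])
            else:
                first_seen[(key, value)] = r["id"]

    clusters = {}
    for rid in parent:
        clusters.setdefault(find(rid), set()).add(rid)
    return list(clusters.values())


records = [
    {"id": "crm-1", "email": "ada@example.com"},
    {"id": "web-7", "email": "ada@example.com", "phone": "5550100"},
    {"id": "pos-3", "phone": "5550100"},
    {"id": "crm-2", "email": "bob@example.com"},
]
profiles = resolve_identities(records)
```

Here `crm-1` and `web-7` share an email, and `web-7` and `pos-3` share a phone number, so the three collapse into one profile while `crm-2` stays separate.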

Resolved profiles power segments for activation, with privacy controls such as consent management built in. These controls let teams unify zero-party data (information customers share intentionally) while meeting GDPR and CCPA obligations in multi-cloud setups.

Activation and Intelligence Layer

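Data flows to Salesforce apps (such as Marketing Cloud and Tableau) or to external systems through APIs and Flows. Activation draws on insights and segments. Calculated insights are metrics, such as lifetime value, computed per profile. Streaming insights and data actions respond to events as they arrive, driving real-time personalisation.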
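In Data Cloud a calculated insight is defined in SQL. The Python sketch below only mirrors the logic: aggregate a metric per unified profile, then let a segment filter on it. The order fields (`unified_id`, `amount`), the threshold, and both helper functions are hypothetical.

```python
from collections import defaultdict


def lifetime_value(orders: list) -> dict:
    """Sum order amounts per unified individual, like a simple calculated insight."""
    totals = defaultdict(float)
    for order in orders:
        totals[order["unified_id"]] += order["amount"]
    return dict(totals)


def build_segment(ltv: dict, min_ltv: float) -> list:
    """Select the unified IDs whose lifetime value meets the threshold."""
    return sorted(uid for uid, total in ltv.items() if total >= min_ltv)


orders = [
    {"unified_id": "u1", "amount": 120.0},
    {"unified_id": "u2", "amount": 40.0},
    {"unified_id": "u1", "amount": 30.0},
    {"unified_id": "u3", "amount": 200.0},
]
high_value = build_segment(lifetime_value(orders), min_ltv=100.0)
```

Separating the metric from the segment rule mirrors the platform's split. The insight is computed once, and several segments can reuse it with different thresholds.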

Einstein AI integrates natively, providing predictions, recommendations, and generative AI on unified data. Developers can extend the platform through Data Cloud APIs (e.g., Query API, Segment API) or embed Data Cloud data in Lightning components. Because pricing is usage-based, these extensions should be designed with consumption costs in mind.

Developer Tools and APIs

Primary APIs include:

- Ingestion API: Streams events from apps or IoT devices, or loads data in bulk.

- Query API: SQL queries on DMOs and EDLOs.

- Profile API: Accesses resolved individuals.

- Segment API: Manages activation audiences.
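As an illustration of the Query API, the sketch below builds, but does not send, a SQL query request. It assumes a V2-style endpoint at `/api/v2/query` on the Data Cloud tenant host that accepts a JSON body with a `sql` field. It also assumes an existing Data Cloud access token. The endpoint path, the `build_query_request` helper, and the tenant host are assumptions. The table and column names follow the `ssot__...__dlm` naming convention for standard DMOs and are illustrative only. Confirm all of these against the Query API reference.

```python
import json
import urllib.request


def build_query_request(tenant_endpoint: str, access_token: str,
                        sql: str) -> urllib.request.Request:
    """Build, but do not send, a Query API request.

    ASSUMPTION: the endpoint is https://{tenant}/api/v2/query and
    accepts a JSON body of the form {"sql": "..."}.
    """
    return urllib.request.Request(
        f"https://{tenant_endpoint}/api/v2/query",
        data=json.dumps({"sql": sql}).encode("utf-8"),
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# Illustrative query against a standard Individual DMO.
SQL = ("SELECT ssot__Id__c, ssot__FirstName__c "
       "FROM ssot__Individual__dlm LIMIT 10")
query_request = build_query_request("mytenant.c360a.salesforce.com",
                                    "DATA_CLOUD_ACCESS_TOKEN", SQL)
# Sending it would be: urllib.request.urlopen(query_request)
```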

Use VS Code with the Salesforce Extensions and Salesforce CLI for setup; Flows and Apex bring Data Cloud objects into core Salesforce. Real-time features rely on publish/subscribe (pub/sub) messaging, so event-driven apps can react to data changes and update components as events occur.
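The pub/sub pattern itself is simple: publishers emit events on a topic without knowing who listens, and every subscriber on that topic receives them. The in-process toy below shows only that decoupling. It is not Salesforce's Pub/Sub API, which is a gRPC service with its own client libraries. The `EventBus` class and the `ProfileUpdated` topic name are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """Minimal in-process publish/subscribe bus (illustration only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        """Register a handler to be called for every event on `topic`."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver `event` to each subscriber of `topic`; return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


bus = EventBus()
received = []
bus.subscribe("ProfileUpdated", received.append)
bus.publish("ProfileUpdated", {"unified_id": "u1", "field": "Email"})
```

A component that subscribes to `ProfileUpdated` reacts as soon as the event is published. A production event bus adds durability, ordering, and replay on top of this basic shape.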

Implementation Best Practices

Start with a proof of concept: ingest sample data, map it to DMOs (Data Model Objects), resolve identities, and activate a segment. Design for scale: partition data spaces, monitor ingestion quotas, and prefer joins on locally ingested data over federated queries when latency matters.

Security features include field-level encryption and role-based access; test changes in sandbox orgs first. Common pitfalls include relying too heavily on federation without local copies of high-velocity data, and overlooking usage-based pricing, which is charged per row ingested and queried.

Use Cases for Developers

- Personalised Journeys: Real-time segments for dynamic web content.

- AI Apps: Train Einstein models on harmonised data.

- Cross-Cloud Sync: Share data across clouds without creating extra copies, using MuleSoft (a platform for building application networks) for hybrid integrations.

Performance and Scaling

The system manages petabytes of data through columnar storage and indexing, allowing for sub-second queries. Developers optimise with materialised views for frequent segments and async processing for heavy transforms.

This architecture positions Data Cloud as a developer-friendly platform for real-time Customer Relationship Management (CRM) innovation, blending hyperscale data with Salesforce’s low-code ecosystem.